How do I join in Scala?

…

1. SQL Join Types & Syntax.

| JoinType | Join String | Equivalent SQL Join |
|---|---|---|
| Inner.sql | inner | INNER JOIN |
| FullOuter.sql | outer, full, fullouter, full_outer | FULL OUTER JOIN |

What are the types of joins in Spark?

Spark SQL supports several join types: inner join, cross join, left outer join, right outer join, full outer join, left semi join, and left anti join.

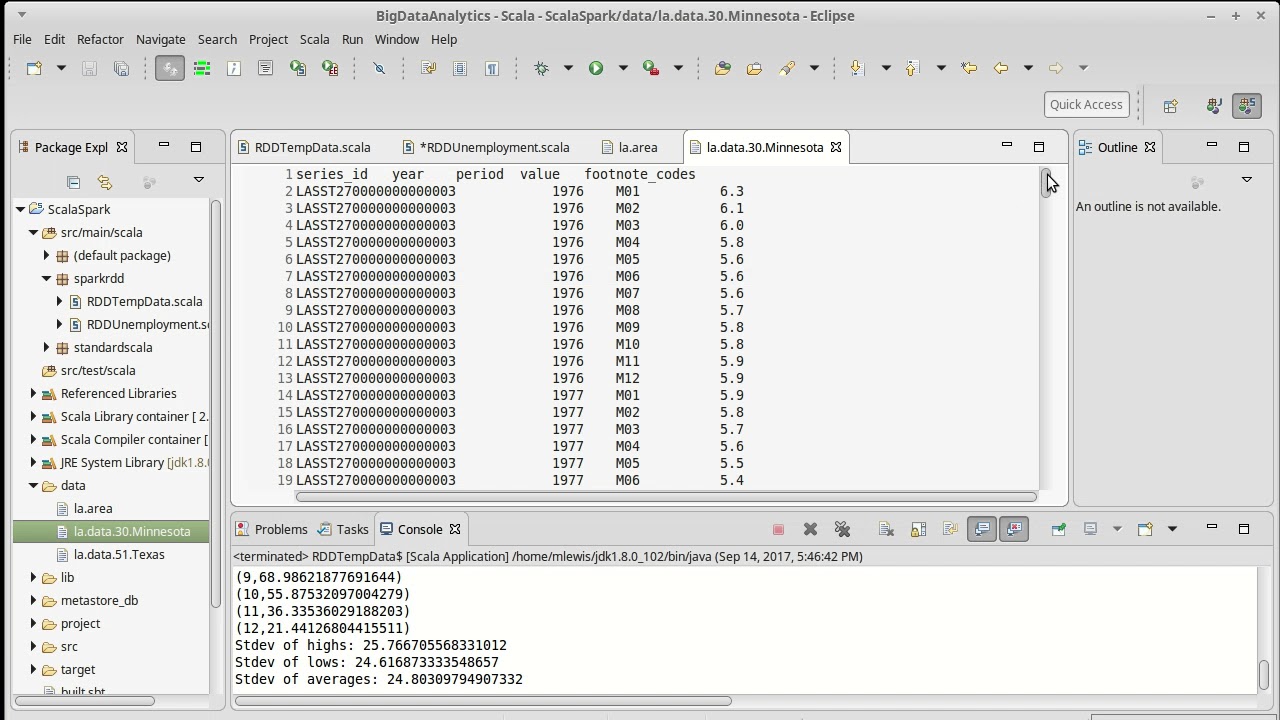

RDD Joins Part 1 with Spark (using Scala)

Images related to the topicRDD Joins Part 1 with Spark (using Scala)

What are the 4 join types?

The four basic join types are inner, left outer, right outer, and full outer. In practice, inner joins and left outer joins cover most needs.

How do I join two data frames in Scala?

- Using the join operator: join(right: Dataset[_], joinExprs: Column, joinType: String): DataFrame or join(right: Dataset[_]): DataFrame. …

- Using Where to provide Join condition. …

- Using Filter to provide Join condition. …

- Using SQL Expression.
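
The four approaches listed above can be sketched side by side. This is a minimal sketch, assuming Spark 3.x with spark-sql on the classpath; the emp and dept DataFrames, their column names, and the JoinWaysSketch object are hypothetical and exist only for illustration.

```scala
import org.apache.spark.sql.SparkSession

object JoinWaysSketch {
  // Returns the row count produced by each approach on hypothetical sample data.
  def run(spark: SparkSession): Map[String, Long] = {
    import spark.implicits._
    val emp = Seq((1, "Ann", 10), (2, "Bob", 20), (3, "Cid", 30))
      .toDF("emp_id", "name", "dept_id")
    val dept = Seq((10, "Sales"), (20, "Ops")).toDF("dept_id", "dept_name")
    val cond = emp("dept_id") === dept("dept_id")

    // 1. Join operator with an explicit condition and join type.
    val viaJoin = emp.join(dept, cond, "inner")
    // 2. Join without a condition, then supply the condition with where.
    val viaWhere = emp.join(dept).where(cond)
    // 3. Same idea with filter (where is an alias for filter).
    val viaFilter = emp.join(dept).filter(cond)
    // 4. SQL expression over temporary views.
    emp.createOrReplaceTempView("emp")
    dept.createOrReplaceTempView("dept")
    val viaSql = spark.sql(
      "SELECT e.*, d.dept_name FROM emp e INNER JOIN dept d ON e.dept_id = d.dept_id")

    Map(
      "join" -> viaJoin.count(),
      "where" -> viaWhere.count(),
      "filter" -> viaFilter.count(),
      "sql" -> viaSql.count())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("join-ways").getOrCreate()
    run(spark).foreach { case (how, n) => println(s"$how: $n rows") }
    spark.stop()
  }
}
```

With this sample data, every approach yields the same two matching rows, since the employee in department 30 has no department record.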

What is a left anti join in Spark?

A left anti join returns all rows from the first dataset that do not have a match in the second dataset. Only the columns of the first dataset appear in the result.
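
As an illustration of the left anti join, the sketch below uses the "left_anti" join type string. It assumes Spark 3.x with spark-sql on the classpath; the sample employees and departments and the LeftAntiSketch object are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

object LeftAntiSketch {
  // Names of employees whose dept_id has no match in the department data.
  def unmatchedNames(spark: SparkSession): List[String] = {
    import spark.implicits._
    val emp = Seq((1, "Ann", 10), (2, "Bob", 20), (3, "Cid", 30))
      .toDF("emp_id", "name", "dept_id")
    val dept = Seq((10, "Sales"), (20, "Ops")).toDF("dept_id", "dept_name")

    // "left_anti" keeps only the left rows that have no match on the right.
    emp.join(dept, Seq("dept_id"), "left_anti")
      .select("name")
      .as[String]
      .collect()
      .toList
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("left-anti").getOrCreate()
    println(unmatchedNames(spark)) // only "Cid" has no department
    spark.stop()
  }
}
```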

What is a cross join in Spark?

The crossJoin method returns the Cartesian product of a DataFrame with another DataFrame.
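
A short sketch of crossJoin on two tiny DataFrames may help. It assumes Spark 3.x with spark-sql on the classpath; the sizes and colors data and the CrossJoinSketch object are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

object CrossJoinSketch {
  def pairCount(spark: SparkSession): Long = {
    import spark.implicits._
    val sizes = Seq("S", "M", "L").toDF("size")
    val colors = Seq("red", "blue").toDF("color")
    // Every size is paired with every color: 3 x 2 = 6 rows.
    sizes.crossJoin(colors).count()
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("cross-join").getOrCreate()
    println(pairCount(spark))
    spark.stop()
  }
}
```

Because the result size is the product of the two input sizes, a cross join is best kept to small inputs.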

What is inner join?

Inner joins combine records from two tables whenever there are matching values in a field common to both tables. You can use INNER JOIN with the Departments and Employees tables to select all the employees in each department.

What is cross join?

A cross join is a type of join that returns the Cartesian product of rows from the tables in the join. In other words, it combines each row from the first table with each row from the second table.

How is a join performed in Spark?

In order to join data, Spark needs the rows that share a join key to live on the same partition. For RDD joins, the default implementation is a shuffled hash join. Spark SQL, by default, prefers a sort-merge join for large inputs and a broadcast hash join when one side is small enough.
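
The idea behind a shuffled hash join can be sketched with plain Scala collections. This is not Spark's implementation, only an illustration under simplifying assumptions: partitions are modeled as in-memory maps, and all names (ShuffledHashJoinSketch, partition, hashJoin) are hypothetical.

```scala
object ShuffledHashJoinSketch {
  // Step 1 ("shuffle"): route every record to a partition chosen by the hash
  // of its key, so equal keys from both sides land in the same partition.
  def partition[K, V](rows: Seq[(K, V)], numPartitions: Int): Map[Int, Seq[(K, V)]] =
    rows.groupBy { case (k, _) => Math.floorMod(k.hashCode, numPartitions) }

  // Step 2 (per partition): build a hash table on one side, probe it with the other.
  def hashJoin[K, A, B](left: Seq[(K, A)], right: Seq[(K, B)]): Seq[(K, (A, B))] = {
    val table: Map[K, Seq[B]] =
      right.groupBy(_._1).map { case (k, rs) => k -> rs.map(_._2) }
    for {
      (k, a) <- left
      b <- table.getOrElse(k, Seq.empty)
    } yield (k, (a, b))
  }

  // Full inner join: shuffle both sides, then join partition by partition.
  def join[K, A, B](left: Seq[(K, A)], right: Seq[(K, B)], numPartitions: Int): Seq[(K, (A, B))] = {
    val l = partition(left, numPartitions)
    val r = partition(right, numPartitions)
    (0 until numPartitions).flatMap { p =>
      hashJoin(l.getOrElse(p, Seq.empty), r.getOrElse(p, Seq.empty))
    }
  }

  def main(args: Array[String]): Unit = {
    val left = Seq(1 -> "a", 2 -> "b", 3 -> "c")
    val right = Seq(1 -> "x", 3 -> "y", 3 -> "z")
    join(left, right, numPartitions = 4).foreach(println)
  }
}
```

The shuffle step is what makes joins expensive in a cluster: each record may have to cross the network to reach the partition that owns its key.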

What are the six types of joins?

- INNER JOIN.

- LEFT OUTER JOIN.

- RIGHT OUTER JOIN.

- FULL OUTER JOIN.

- SELF JOIN.

- CROSS JOIN.

Why do we use joins?

The SQL JOIN clause is used to combine records from two or more tables in a database. A JOIN is a means of combining fields from two tables by using values common to each.

Is LEFT JOIN the same as JOIN?

No. The LEFT JOIN statement is similar to the JOIN statement, but a plain JOIN is an inner join and returns only rows that match in both tables. A LEFT JOIN also includes every row of the table referenced on the left side of the statement, even when it has no match on the right.

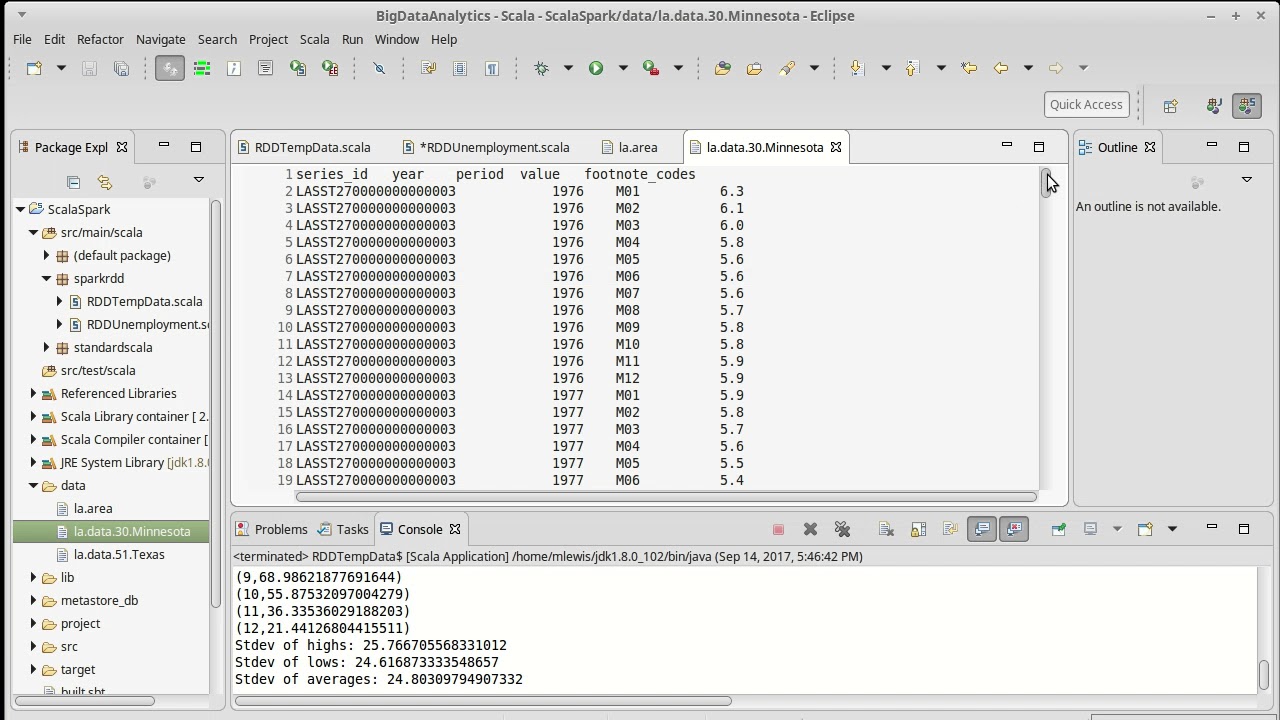

DataFrame: join | inner, cross | Spark DataFrame Practical | Scala API | Part 15 | DM | DataMaking

Images related to the topicDataFrame: join | inner, cross | Spark DataFrame Practical | Scala API | Part 15 | DM | DataMaking

How do I merge multiple Dataframes in Spark Scala?

- Spark: Merge 2 dataframes by adding row index/number on both dataframes.

- Rename column names when select from dataframe.

- Spark scala : select column name from other dataframe.

- joining two dataframes having duplicate row.

- Iterate a spark dataframe efficiently without using collect.

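Is a Spark join an action?

No. A join is a transformation, not an action. Spark evaluates it lazily, so the join runs only when an action such as count or show is called on the result. If a join statement appears to take a long time, the cost usually belongs to the action that triggers it.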

How do you optimize a join in Spark?

Use broadcast joins wherever possible, and filter out rows that are irrelevant to the join before joining to avoid unnecessary data shuffling. In cases where you are confident that a shuffle hash join is better than a sort-merge join, disable the sort-merge join preference for those scenarios.
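
The advice above (filter early, broadcast the small side) can be sketched as follows. This assumes Spark 3.x with spark-sql on the classpath; the orders and dept data, the amount threshold, and the BroadcastJoinSketch object are hypothetical.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.broadcast

object BroadcastJoinSketch {
  def joinedCount(spark: SparkSession): Long = {
    import spark.implicits._
    val orders = Seq((1, 10, 99.0), (2, 20, 15.5), (3, 99, 7.0))
      .toDF("order_id", "dept_id", "amount")
    val dept = Seq((10, "Sales"), (20, "Ops")).toDF("dept_id", "dept_name")

    // Drop rows the query does not need before the join, so less data moves.
    val relevant = orders.filter($"amount" > 10.0)

    // broadcast() is a hint asking Spark to ship the small table to every
    // executor instead of shuffling both sides.
    relevant.join(broadcast(dept), Seq("dept_id"), "inner").count()
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("broadcast-join").getOrCreate()
    println(joinedCount(spark))
    spark.stop()
  }
}
```

For the sort-merge preference, Spark SQL has a setting named spark.sql.join.preferSortMergeJoin. Whether changing it helps depends on the data, so it is worth comparing query plans with explain before and after.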

What is a semi join?

Definition. Semijoin is a technique for processing a join between two tables that are stored at different sites. The basic idea is to reduce the transfer cost by first sending only the projected join column(s) to the other site, where they are joined with the second relation.
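
The semijoin steps described above can be sketched in plain Scala. The two sites are modeled as in-memory sequences, which is a simplifying assumption; the Order and Customer types and all function names are hypothetical.

```scala
object SemijoinSketch {
  case class Order(orderId: Int, customerId: Int)
  case class Customer(customerId: Int, name: String)

  // Step 1 (site A): project only the distinct join-column values to send.
  def projectKeys(orders: Seq[Order]): Set[Int] =
    orders.map(_.customerId).toSet

  // Step 2 (site B): keep only the customers that can take part in the join.
  def reduceCustomers(customers: Seq[Customer], keys: Set[Int]): Seq[Customer] =
    customers.filter(c => keys.contains(c.customerId))

  // Step 3 (site A): join the orders with the reduced customer set.
  def joinAtSiteA(orders: Seq[Order], reduced: Seq[Customer]): Seq[(Order, Customer)] =
    for {
      o <- orders
      c <- reduced
      if o.customerId == c.customerId
    } yield (o, c)

  def main(args: Array[String]): Unit = {
    val orders = Seq(Order(1, 7), Order(2, 7), Order(3, 9))
    val customers = Seq(
      Customer(7, "Ann"), Customer(8, "Bob"), Customer(9, "Cid"), Customer(10, "Dee"))
    val reduced = reduceCustomers(customers, projectKeys(orders))
    println(s"shipped ${reduced.size} of ${customers.size} customer rows")
    joinAtSiteA(orders, reduced).foreach(println)
  }
}
```

The saving comes from step 2: only the customer rows that will actually match are transferred, rather than the whole table.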

What is anti LEFT join?

There are two types of anti joins: A left anti join : This join returns rows in the left table that have no matching rows in the right table. A right anti join : This join returns rows in the right table that have no matching rows in the left table.

What is the difference between left join and left outer join?

There really is no difference between a LEFT JOIN and a LEFT OUTER JOIN. Both versions of the syntax will produce the exact same result in PL/SQL. Some people do recommend including outer in a LEFT JOIN clause so it’s clear that you’re creating an outer join, but that’s entirely optional.

Is Cross join same as inner join?

A cross join matches every row in one table to every row in another table, while an inner join matches rows on a field or fields. If each of two tables has 10 rows, the cross join always returns 100 rows, whereas the inner join returns only the pairs whose join fields match.

What is the difference between cross apply and cross join?

In simple terms, a join relies on self-sufficient sets of data, i.e. the two sets must not depend on each other. CROSS APPLY, on the other hand, evaluates its right-hand side once for each row of the left-hand set, so the right-hand side (for example a table-valued function or a correlated subquery) can reference columns from the left.

What is explode in spark SQL?

The Spark SQL explode function splits an array or map DataFrame column into rows, one row per element. Spark defines several flavors of this function: explode_outer, which also keeps rows whose array or map is null or empty; posexplode, which adds the position of each element; and posexplode_outer, which combines both behaviors.
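
The difference between the flavors is easiest to see on a row with an empty array. The sketch below assumes Spark 3.x with spark-sql on the classpath; the name and skills columns and the ExplodeSketch object are hypothetical.

```scala
import org.apache.spark.sql.SparkSession
import org.apache.spark.sql.functions.{explode, explode_outer, posexplode}

object ExplodeSketch {
  // Returns the row counts for (explode, explode_outer, posexplode).
  def rowCounts(spark: SparkSession): (Long, Long, Long) = {
    import spark.implicits._
    val df = Seq(
      ("Ann", Seq("scala", "sql")),
      ("Bob", Seq.empty[String])
    ).toDF("name", "skills")

    // explode: one row per element; Bob's empty array produces no row.
    val exploded = df.select($"name", explode($"skills").as("skill"))
    // explode_outer: Bob is kept, with a null skill.
    val explodedOuter = df.select($"name", explode_outer($"skills").as("skill"))
    // posexplode: like explode, plus a "pos" column with the element index.
    val withPos = df.select($"name", posexplode($"skills"))

    (exploded.count(), explodedOuter.count(), withPos.count())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("explode").getOrCreate()
    println(rowCounts(spark))
    spark.stop()
  }
}
```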

What is full outer join?

A full outer join is a method of combining tables so that the result includes unmatched rows of both tables. If you are joining two tables and want the result set to include unmatched rows from both tables, use a FULL OUTER JOIN clause. The matching is based on the join condition.
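
A full outer join can be sketched in Spark with the "full_outer" join type string. The sketch assumes Spark 3.x with spark-sql on the classpath; the sample data and the FullOuterJoinSketch object are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

object FullOuterJoinSketch {
  // Returns (total rows, rows with no employee, rows with no department).
  def counts(spark: SparkSession): (Long, Long, Long) = {
    import spark.implicits._
    val emp = Seq((1, "Ann", 10), (2, "Bob", 20), (3, "Cid", 30))
      .toDF("emp_id", "name", "dept_id")
    val dept = Seq((10, "Sales"), (20, "Ops"), (40, "HR")).toDF("dept_id", "dept_name")

    // Unmatched rows from either side are kept; missing columns become null.
    val joined = emp.join(dept, Seq("dept_id"), "full_outer")

    (joined.count(),
      joined.filter($"name".isNull).count(),
      joined.filter($"dept_name".isNull).count())
  }

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local[*]").appName("full-outer").getOrCreate()
    println(counts(spark))
    spark.stop()
  }
}
```

Here departments 10 and 20 match, department 30 has an employee but no department record, and department 40 has a record but no employee, so the result has four rows.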

What is left join and inner join?

INNER JOIN: returns rows when there is a match in both tables. LEFT JOIN: returns all rows from the left table, even if there are no matches in the right table. RIGHT JOIN: returns all rows from the right table, even if there are no matches in the left table.

What is full join?

Unlike INNER JOIN, a FULL JOIN returns all the rows from both joined tables, whether they have a matching row or not. Hence, a FULL JOIN is also referred to as a FULL OUTER JOIN. A FULL JOIN returns unmatched rows from both tables as well as the overlap between them.
